Every time you decide to grab a coffee mug, your brain quickly performs an elaborate string of calculations: it visually recognizes the mug, chooses how you will grip it, and commands arm muscles to contract in just the right ways. Neuroscientists generally agree that the brain delegates certain tasks to certain neurons as it completes these calculations, but how it does this is still largely unknown.

With the help of ultrafast lasers, CSHL neuroscientists are recording this brain activity and interpreting it with machine learning techniques, mathematical methods designed for making sense of complex real-world data. Churchland Lab postdoc Matt Kaufman looks at what happens in a mouse’s brain as the animal makes relatively simple, sensory-guided decisions, such as indicating whether the rate of a series of sounds is higher or lower than a threshold it was trained to recognize.

“This type of decision making is particularly interesting to me because we can reduce a core cognitive process enough to study exactly how it’s implemented in the brain,” he says.

But to understand how a brain manages tasks within its team of neurons, it’s important to be able to see many individual neurons at once within a living brain—and that’s challenging with traditional microscopes.

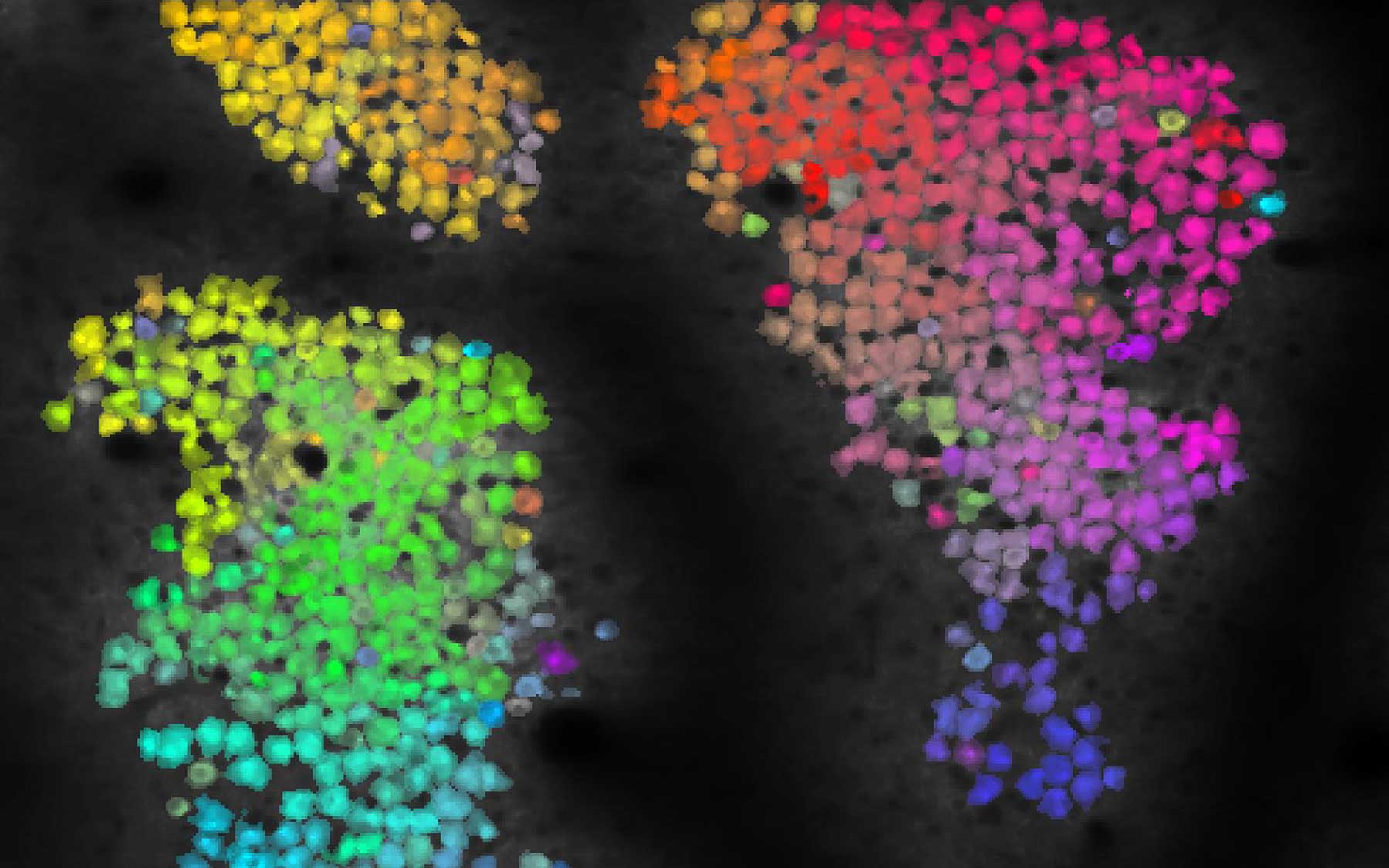

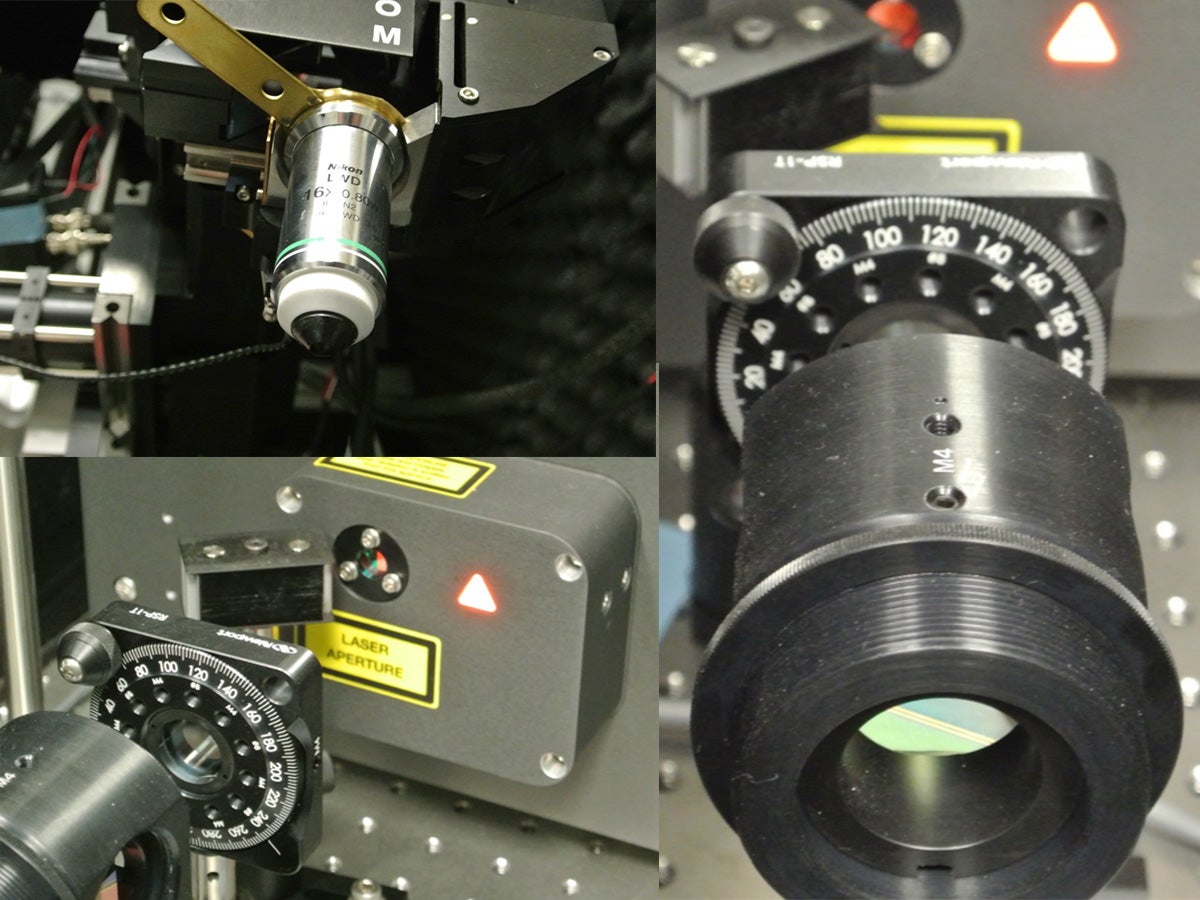

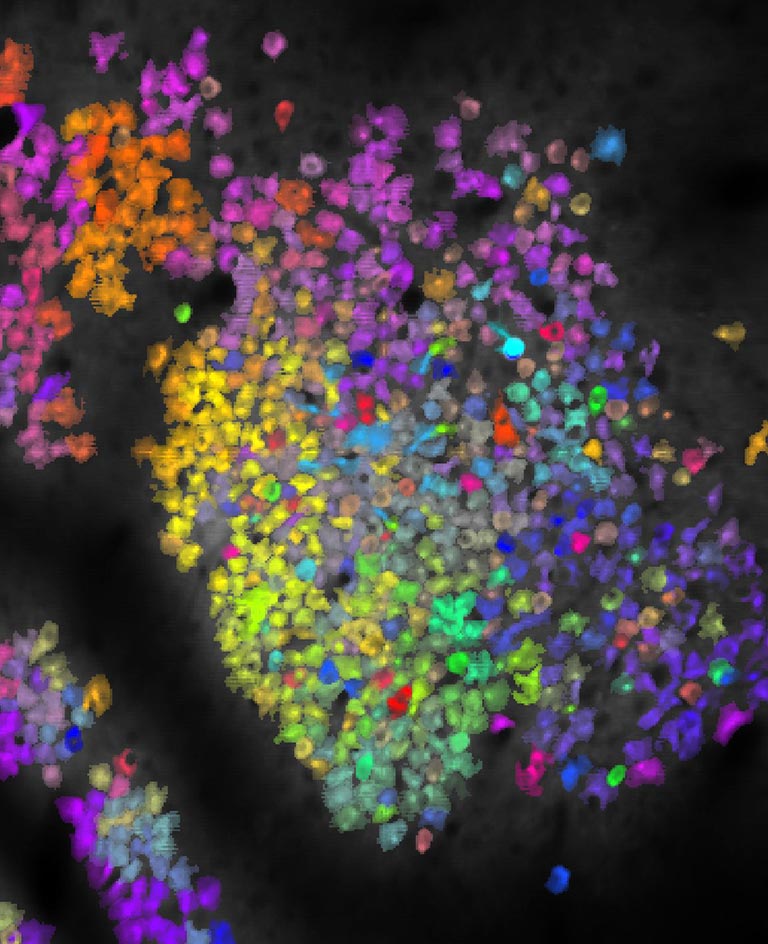

Kaufman obtained the image above using a two-photon laser scanning microscope, which scans the brain with ultrafast laser pulses. Here, ultrafast means each pulse lasts just a ten-thousandth of one billionth of a second, or about 100 femtoseconds. Each little blotch is an image of an individual neuron, and those that appear in similar colors exhibit similar activity patterns.

“This starts to tell us about the organization of brain areas—which neurons are maybe connected to one another or receiving common inputs—and hopefully will let us figure out where the boundaries of the areas are and what they look like at cellular resolution,” Kaufman explains.

There are two keys to seeing the activity of these neurons. First, the mice used in these experiments express a specially engineered fluorescent protein in their neurons. This protein can light up only when the neuron is active, allowing the researchers to detect each individual neuron’s activity, but it still needs a power source.

The second key is infrared light, which provides this power and can travel farther into brain tissue than the blue light that typical microscopes use. When two infrared photons—light particles—hit one of these flashing molecules at exactly the same time, their combined energy provides the fuel that these molecules need to light up.
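The energy bookkeeping behind this "two photons at once" trick can be sketched in a few lines of Python. This is only an illustration: the 920 nm infrared wavelength is an assumed value, not one given in the article, and the sketch ignores the details of how real fluorescent molecules absorb light. It simply shows that two infrared photons together carry the same energy as one photon of half the wavelength, which falls in the blue range.

```python
# Physical constants (exact SI values).
PLANCK_J_S = 6.62607015e-34       # Planck constant, joule-seconds
LIGHT_SPEED_M_S = 299_792_458.0   # speed of light, metres per second
JOULES_PER_EV = 1.602176634e-19   # joules in one electronvolt

# ASSUMPTION: illustrative infrared wavelength, not stated in the article.
ASSUMED_INFRARED_NM = 920.0


def photon_energy_ev(wavelength_nm):
    """Energy of a single photon in electronvolts, from E = h * c / wavelength."""
    wavelength_m = wavelength_nm * 1e-9
    return PLANCK_J_S * LIGHT_SPEED_M_S / wavelength_m / JOULES_PER_EV


one_infrared = photon_energy_ev(ASSUMED_INFRARED_NM)
two_infrared = 2 * one_infrared
# A photon with half the wavelength carries twice the energy.
one_blue = photon_energy_ev(ASSUMED_INFRARED_NM / 2)

print(f"one infrared photon ({ASSUMED_INFRARED_NM:.0f} nm): {one_infrared:.2f} eV")
print(f"two infrared photons combined: {two_infrared:.2f} eV")
print(f"one blue photon ({ASSUMED_INFRARED_NM / 2:.0f} nm): {one_blue:.2f} eV")
```

Under the assumed wavelength, a single infrared photon carries roughly 1.35 eV, too little on its own, while a pair arriving together matches the energy of one blue photon.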

Getting two photons to hit the same molecule at the same exact time means utterly bombarding an area with photons. In other words, you need a really, really bright laser. The problem is, those tend to set things on fire. But by using “ludicrously” brief laser pulses, as Kaufman puts it, the total heating is kept to a safely low level.
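A rough back-of-the-envelope sketch shows why brief pulses help. It assumes idealized rectangular pulses, a two-photon signal that grows with the square of the instantaneous laser power, and heating that tracks the average power. The pulse length comes from the figure quoted in this article; the repetition rate and average power are hypothetical values chosen only for illustration.

```python
# Pulse length quoted in the article: a ten-thousandth of a billionth of a second.
PULSE_DURATION_S = 1e-4 * 1e-9  # 1e-13 s, i.e. about 100 femtoseconds

# ASSUMPTIONS (not from the article): illustrative laser settings.
ASSUMED_REPETITION_RATE_HZ = 80e6  # pulses per second
ASSUMED_AVERAGE_POWER_W = 0.05     # average power, which sets the heating


def duty_cycle(pulse_duration_s, repetition_rate_hz):
    """Fraction of the time the laser is actually on."""
    return pulse_duration_s * repetition_rate_hz


def peak_power_w(average_power_w, pulse_duration_s, repetition_rate_hz):
    """Power during a pulse when all the energy is squeezed into the on-time."""
    return average_power_w / duty_cycle(pulse_duration_s, repetition_rate_hz)


def two_photon_gain(pulse_duration_s, repetition_rate_hz):
    """Two-photon signal of a pulsed beam relative to a steady beam with the
    same average power (and so the same heating).

    Signal scales with the time average of power squared:
    (P_avg / d)**2 * d = P_avg**2 / d, so the gain over a steady beam is 1 / d.
    """
    return 1.0 / duty_cycle(pulse_duration_s, repetition_rate_hz)


d = duty_cycle(PULSE_DURATION_S, ASSUMED_REPETITION_RATE_HZ)
peak = peak_power_w(ASSUMED_AVERAGE_POWER_W, PULSE_DURATION_S,
                    ASSUMED_REPETITION_RATE_HZ)
gain = two_photon_gain(PULSE_DURATION_S, ASSUMED_REPETITION_RATE_HZ)

print(f"laser is on {d:.1e} of the time")
print(f"peak power during a pulse: {peak:.0f} W")
print(f"two-photon signal gain at equal heating: {gain:.0f}x")
```

With these assumed settings the laser is on for only a few millionths of the time, so the brightness during each pulse can be enormous while the average power, and therefore the heating, stays small.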

“So you image all these neurons lighting up and then you say, ‘Ok, so we’ve got a bunch of flashing neurons. What on Earth does this activity mean? How does the animal use this activity?’” says Kaufman.

That’s where neuroscientists have gotten some help from the tremendous advances in machine learning achieved in recent years. Engineers and mathematicians have devised methods for interpreting large amounts of information from complex systems, and these techniques provide an entry point for understanding how the brain carries out the calculations needed to interpret sensory inputs, make decisions, and control movement.

Neuroscientists have only started using these microscopes to image active brains in the past few years, but several CSHL labs already use them in combination with fluorescent proteins to study brains in action. With the help of these ludicrous lasers and insights from machine learning, Kaufman and others in the field hope to shed light on the dark inner workings of the brain.
